
Scaling a fintech or e-commerce startup in India or the UAE is hard enough without your QA process becoming the bottleneck. Most CTOs assume the fix is straightforward: hire more testers. The data tells a different story. Automation now lets lean QA teams do the work of much larger ones, cutting regression cycles from ten days to two and reducing production bugs by as much as 40%. This guide covers how to make that shift, which strategies work, where teams go wrong, and what the numbers look like when automation is done right.

| Point | Details |
|---|---|
| Automation reduces manual QA | Startups have cut manual QA workload by up to 90% within 90 days and shortened regression cycles. |
| Core workflows drive ROI | Automating API and regression tests first yields the highest value fastest. |
| Hybrid models avoid pitfalls | Combining automated and manual QA delivers reliable coverage and fewer production bugs. |
| Cost savings are substantial | Automation has cut QA costs by more than 60% compared with scaling teams. |

The instinct to scale QA by adding headcount made sense a decade ago. Today, it creates problems faster than it solves them. Every new tester needs onboarding, context, tooling access, and time to ramp up. In fast-moving fintech and e-commerce environments, that lag is expensive. You release faster than new hires can learn your system.

The math is also unfavorable. In India and the UAE, experienced QA engineers are in demand. Salaries are rising. Retention is a real challenge. Hiring three testers to cover a growing test suite is not a strategy. It is a recurring cost with diminishing returns.

Automation changes the equation entirely. Manual QA overhead can drop by up to 90% within 90 days when the right automation pod is in place. That is not a theoretical number. GeekyAnts documented this reduction in a real deployment. MuukTest reported regression cycles falling from 10 days to 2, with 40% fewer production bugs. Avo Bank achieved 63% cost savings by reducing QA person-days from 25 to 3 per release cycle.

These are not outliers. They reflect what happens when automation targets the right test categories.

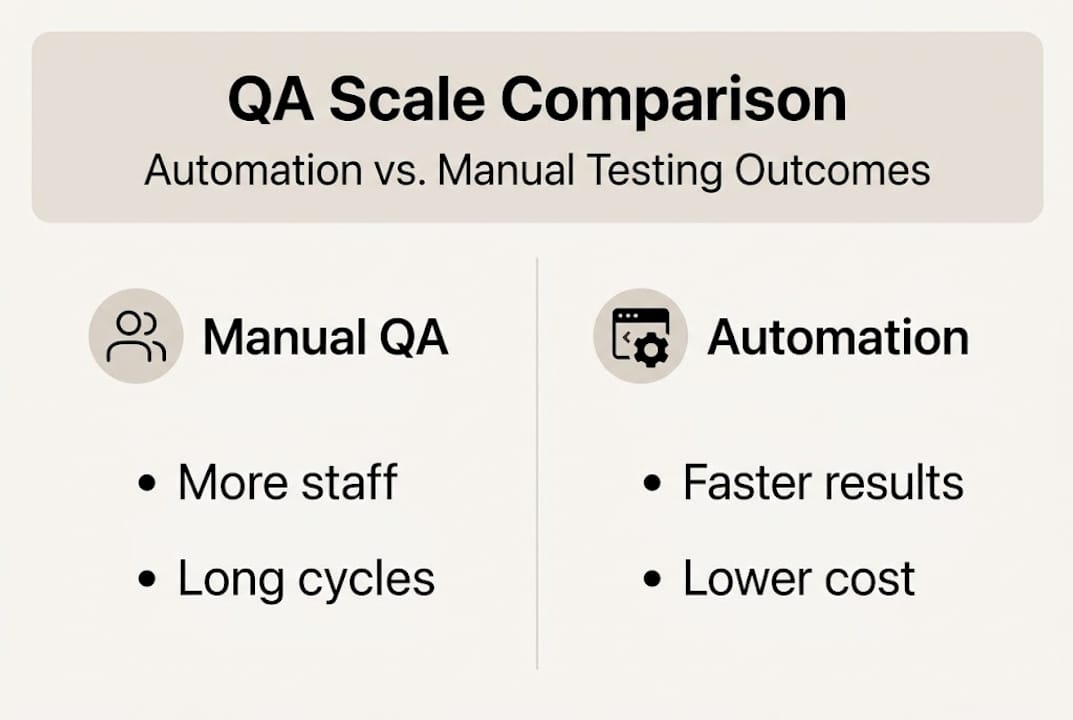

Here is what the comparison looks like in practice:

| Metric | Manual QA scaling | Automation-first approach |
|---|---|---|
| Regression cycle duration | 8 to 10 days | 2 to 3 days |
| Production bugs per release | Baseline | 40% fewer |
| QA cost per release | High and growing | Up to 63% lower |
| Onboarding time for new coverage | Weeks | Hours to days |
| Scalability | Grows linearly with headcount | Largely decoupled from headcount |

The key insight here is that automation does not just speed things up. It decouples QA capacity from team size. That is the real unlock for startups that need to grow without growing payroll.

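In short, automation beats headcount scaling for startups in India and the UAE because it avoids the onboarding lag of new hires, sidesteps rising salaries and retention risk, and lets QA capacity grow without growing the team.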

If you are thinking about scaling quality with AI, the foundational argument is simple: your test suite should grow with your product, not with your org chart. Founders building AI-augmented testing for startups are already seeing this play out in production.

Knowing automation works is one thing. Knowing where to start and what to prioritize is another. Most teams that fail at automation do so because they try to automate everything at once, or they start with the wrong test types.

The 90% manual QA reduction achieved by GeekyAnts came from systematically targeting the highest-volume, most repetitive test categories first, not from trying to automate everything. For fintech and e-commerce startups, a practical sequence looks like this:

| Test type | Automation suitability | Priority |
|---|---|---|
| Regression tests | Very high | First |
| API and integration tests | Very high | First |
| Smoke tests | High | Second |
| Data-driven tests | High | Second |
| UI and visual tests | Medium | Third |
| Exploratory and UX tests | Low | Manual only |
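One way to turn the prioritization table above into a working backlog is to tag each existing manual test with its type and sort. The sketch below is a minimal illustration, assuming you can label tests by type and know roughly how often each one runs; the type labels, the `QaCase` class, and the `runs_per_month` field are hypothetical, not part of any tool.

```python
from dataclasses import dataclass

# Priority tiers from the table above: lower number = automate sooner.
# None marks test types that stay manual; unknown types are also left out.
PRIORITY = {
    "regression": 1,
    "api": 1,
    "smoke": 2,
    "data_driven": 2,
    "ui_visual": 3,
    "exploratory_ux": None,
}


@dataclass
class QaCase:
    name: str
    test_type: str
    runs_per_month: int  # hypothetical usage signal used to break ties


def automation_backlog(cases: list[QaCase]) -> list[str]:
    """Return the names of cases worth automating, highest priority first.

    Within a tier, cases that run more often come first, because repetitive,
    high-volume checks pay back the automation effort fastest.
    """
    candidates = [c for c in cases if PRIORITY.get(c.test_type) is not None]
    candidates.sort(key=lambda c: (PRIORITY[c.test_type], -c.runs_per_month))
    return [c.name for c in candidates]


cases = [
    QaCase("checkout_ui_layout", "ui_visual", 20),
    QaCase("payment_api_contract", "api", 300),
    QaCase("onboarding_ux_review", "exploratory_ux", 5),
    QaCase("login_regression", "regression", 400),
    QaCase("homepage_smoke", "smoke", 500),
]
print(automation_backlog(cases))
# ['login_regression', 'payment_api_contract', 'homepage_smoke', 'checkout_ui_layout']
```

The exploratory UX review never enters the backlog, which mirrors the "Manual only" row: it stays with human testers by design.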

Pro Tip: Use AI-driven shift-left testing to catch defects earlier in the development cycle. Shifting testing left means running automated checks at the code and API level before features even reach the QA stage. This sharply reduces the cost of fixing bugs, since defects found early are far cheaper to resolve than those found in production.
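As a concrete shift-left example, an API contract check can run on every build, long before a tester opens the app. This is a minimal sketch assuming a hypothetical payment-status endpoint that returns JSON; the field names, allowed statuses, and the `contract_violations` helper are illustrative assumptions, not a real provider's schema.

```python
import json

# Hypothetical contract for a payment-status API response. Field names and
# allowed statuses are illustrative assumptions, not a real provider's schema.
REQUIRED_FIELDS = {
    "transaction_id": str,
    "amount_minor": int,  # amount in minor units (e.g. paise, fils) to avoid float rounding
    "currency": str,
    "status": str,
}
ALLOWED_STATUSES = {"pending", "settled", "failed"}


def contract_violations(raw_body: str) -> list[str]:
    """Return every contract problem found; an empty list means the response passes."""
    try:
        body = json.loads(raw_body)
    except json.JSONDecodeError as exc:
        return [f"invalid JSON: {exc.msg}"]
    if not isinstance(body, dict):
        return ["top-level value is not an object"]

    problems = []
    for field, expected_type in REQUIRED_FIELDS.items():
        if field not in body:
            problems.append(f"missing field: {field}")
            continue
        value = body[field]
        # bool is a subclass of int in Python, so reject it explicitly.
        if not isinstance(value, expected_type) or isinstance(value, bool):
            problems.append(f"wrong type for {field}: expected {expected_type.__name__}")

    status = body.get("status")
    if isinstance(status, str) and status not in ALLOWED_STATUSES:
        problems.append(f"unexpected status: {status!r}")
    return problems


# Usage: in CI, a test would assert the list is empty for a real response.
good = '{"transaction_id": "tx_1", "amount_minor": 4999, "currency": "AED", "status": "settled"}'
bad = '{"transaction_id": "tx_2", "amount_minor": "49.99", "status": "refunded"}'
print(contract_violations(good))  # []
print(contract_violations(bad))
```

Returning a list of problems rather than failing on the first one gives developers the full picture in a single CI run.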

Connecting automation to your DevOps pipeline is what turns isolated test scripts into a continuous quality engine. The goal is not automation for its own sake. It is to make quality a built-in property of every release, not a gate at the end.

Automation is not a set-it-and-forget-it solution. Teams that treat it that way end up with a test suite that nobody trusts. Understanding the failure modes is as important as knowing the strategies.

Flaky tests are the most common problem. A flaky test is one that passes sometimes and fails other times without any code change. The usual causes are timing issues (a page element loads slower than the test expects), UI changes that break selectors, or environment inconsistencies. The fix involves adding smart waits and retry logic, and avoiding hard-coded delays. Flaky tests from timing and UI changes are documented as the top failure mode for teams attempting high automation coverage.
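The difference between a hard-coded delay and a smart wait is easiest to see in code. The sketch below uses only the Python standard library: `wait_until` polls a condition until it holds or a timeout expires, and `retry` re-runs a step a bounded number of times. Both helpers are hypothetical; in a real UI suite, framework-native waits such as Selenium's `WebDriverWait` or Playwright's auto-waiting locators are usually the better choice.

```python
import functools
import time
from typing import Callable


def wait_until(condition: Callable[[], bool], timeout: float = 5.0, interval: float = 0.1) -> bool:
    """Poll `condition` until it returns True or `timeout` seconds elapse.

    Unlike a fixed time.sleep(5), this returns as soon as the condition holds,
    so fast environments stay fast and slow ones still get the full budget.
    """
    deadline = time.monotonic() + timeout
    while True:
        if condition():
            return True
        if time.monotonic() >= deadline:
            return False
        time.sleep(interval)


def retry(attempts: int = 3, delay: float = 0.2, exceptions: tuple = (AssertionError,)):
    """Decorator that re-runs a step up to `attempts` times on the given exceptions."""
    def decorator(func):
        @functools.wraps(func)
        def wrapper(*args, **kwargs):
            for attempt in range(1, attempts + 1):
                try:
                    return func(*args, **kwargs)
                except exceptions:
                    if attempt == attempts:
                        raise  # out of attempts: surface the real failure
                    time.sleep(delay)
        return wrapper
    return decorator


# Usage: simulate an element that becomes "visible" after about 0.3 seconds.
start = time.monotonic()


def element_visible() -> bool:
    return time.monotonic() - start > 0.3


print(wait_until(element_visible, timeout=2.0))  # True, after roughly 0.3 s

calls = {"n": 0}


@retry(attempts=3, delay=0.0)
def flaky_step() -> str:
    calls["n"] += 1
    assert calls["n"] >= 2, "simulated transient failure on the first attempt"
    return "ok"


print(flaky_step(), calls["n"])  # ok 2
```

Use retries sparingly and log every retry: a test that only passes on its third attempt is still flaky, and silent retries hide exactly the signal you need.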

High maintenance costs erode ROI. When your UI changes frequently, UI-based tests break constantly. Teams can spend 20 to 50% of their QA time just maintaining existing tests rather than building new coverage. The solution is to prioritize API and logic-layer tests over UI tests, and to use page object models or similar abstractions to make UI tests easier to update.
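A page object model keeps selectors in one class, so a UI change means editing one place instead of every test. This is a minimal sketch assuming a driver that exposes `fill(selector, value)` and `click(selector)`; `FakeDriver`, `CheckoutPage`, and the selectors are hypothetical stand-ins so the example runs without a browser.

```python
from typing import Optional


class FakeDriver:
    """Hypothetical stand-in for a browser driver, so the sketch runs without one.

    It records what was typed and clicked instead of driving a real page.
    """

    def __init__(self) -> None:
        self.fields: dict[str, str] = {}
        self.clicked: list[str] = []

    def fill(self, selector: str, value: str) -> None:
        self.fields[selector] = value

    def click(self, selector: str) -> None:
        self.clicked.append(selector)


class CheckoutPage:
    """Page object: the only place that knows the checkout page's selectors."""

    # Hypothetical selectors. If the UI changes, only these lines need updating.
    CARD_NUMBER = "#card-number"
    PROMO_CODE = "[data-test=promo-code]"
    PAY_BUTTON = "button[data-test=pay]"

    def __init__(self, driver) -> None:
        self.driver = driver

    def pay_with_card(self, card_number: str, promo_code: Optional[str] = None) -> None:
        """Express the user intent; tests call this instead of touching selectors."""
        self.driver.fill(self.CARD_NUMBER, card_number)
        if promo_code:
            self.driver.fill(self.PROMO_CODE, promo_code)
        self.driver.click(self.PAY_BUTTON)


# Usage: a test reads as a user journey, with no selectors in sight.
driver = FakeDriver()
CheckoutPage(driver).pay_with_card("4111111111111111", promo_code="WELCOME10")
print(driver.clicked)  # ['button[data-test=pay]']
```

If the pay button's markup changes, only `PAY_BUTTON` is updated; every test that calls `pay_with_card` keeps working unchanged.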

Over-automation undermines trust. When a test suite has too many flaky or poorly written tests, developers start ignoring failures. A test that cries wolf repeatedly gets disabled or ignored. At that point, your automation provides false confidence, which is worse than no automation at all.
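One practical defense against a suite that cries wolf is to detect flaky tests automatically and quarantine them until they are fixed. Using the definition of flakiness given earlier (different results with no code change), the sketch below flags any test that both passed and failed on the same commit. The `(test_name, commit, passed)` record format is a hypothetical export from CI rerun history.

```python
from collections import defaultdict


def find_flaky(results: list[tuple[str, str, bool]]) -> set[str]:
    """Return names of tests that both passed and failed on the same commit.

    `results` holds hypothetical (test_name, commit_sha, passed) records,
    e.g. exported from CI rerun history. A result change across different
    commits may be a real regression, so it is not flagged here.
    """
    outcomes: dict[tuple[str, str], set[bool]] = defaultdict(set)
    for test_name, commit, passed in results:
        outcomes[(test_name, commit)].add(passed)
    # Both True and False observed for one (test, commit) pair means flaky.
    return {name for (name, _commit), seen in outcomes.items() if len(seen) == 2}


history = [
    ("test_checkout_total", "a1b2", True),
    ("test_checkout_total", "a1b2", False),  # same commit, different result: flaky
    ("test_login", "a1b2", True),
    ("test_login", "c3d4", False),           # new commit: possibly a genuine regression
]
print(find_flaky(history))  # {'test_checkout_total'}
```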

“Automation improves quality and speed, but it does not reduce total testing time. It shifts that time toward exploratory and edge-case testing, where human judgment is irreplaceable.” This reflects a documented reality in QA teams that have pursued high automation coverage.

Manual testers remain essential for exploratory testing, UX evaluation, accessibility checks, and any scenario that requires human interpretation. A payment flow might pass all automated checks and still feel confusing to a real user. That kind of insight only comes from a human tester.

Pro Tip: Build a hybrid testing model from the start. Automate the repetitive, high-volume, stable tests. Keep skilled manual testers focused on exploratory, UX, and edge-case scenarios. This combination delivers better coverage and higher trust than either approach alone.

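In short, the common pitfalls have known fixes: replace hard-coded delays with smart waits and bounded retries to tame flaky tests, favor API and logic-layer tests (and page objects for the UI tests you keep) to contain maintenance, and keep humans on exploratory work so the suite stays trustworthy.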

Understanding production-led quality engineering helps here. The shift away from brittle scripts toward smarter, more resilient test architectures is what separates teams that sustain automation ROI from those that abandon it after six months. And building a long-term QA partnership rather than treating QA as a one-time project is what makes these gains compound over time.

Numbers matter when you are making the case to a board or co-founder. The good news is that the published case data on automation ROI points consistently in one direction.

The cases below show the pattern: teams that implement structured automation programs see large reductions in both cost and defect rates, in some cases within the first quarter.

| Company/case | Metric | Before | After |
|---|---|---|---|
| GeekyAnts | Manual QA workload | 100% | 10% (90% reduction) |
| MuukTest | Regression cycle | 10 days | 2 days |
| MuukTest | Production bugs | Baseline | 40% fewer |
| Avo Bank | QA person-days per release | 25 | 3 |
| Avo Bank | QA cost | Baseline | 63% lower |
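The percentages behind these cases are simple arithmetic, which makes them easy to recompute for your own before-and-after numbers. The helper name below is illustrative.

```python
def percent_reduction(before: float, after: float) -> float:
    """Percentage drop from `before` to `after`, rounded to one decimal place."""
    if before <= 0:
        raise ValueError("before must be positive")
    return round((before - after) / before * 100, 1)


# Before/after figures from the case table above.
print(percent_reduction(10, 2))    # MuukTest regression cycle in days -> 80.0
print(percent_reduction(25, 3))    # Avo Bank QA person-days per release -> 88.0
print(percent_reduction(100, 10))  # GeekyAnts manual QA workload in % -> 90.0
```

Note that Avo Bank's person-days fell by 88% while its QA cost fell by 63%. The case data does not explain the gap; one plausible reading, which is an assumption, is that automation carries its own tooling and maintenance costs, so effort savings do not translate one-to-one into cost savings.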

These results share a common thread. None of these teams eliminated their QA staff. They redirected them. Testers who spent 80% of their time running regression checks manually now spend that time on exploratory testing, edge cases, and new feature validation. The automation handles the repetitive work. The humans handle the judgment calls.

For fintech startups specifically, this matters enormously. Payment gateway integrations, KYC (Know Your Customer) flows, and transaction reconciliation all require both automated coverage for known scenarios and manual judgment for edge cases. You cannot automate your way through a novel fraud pattern, but you can automate the 200 regression checks that confirm your existing fraud rules still work after every deployment.
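The "200 regression checks" idea maps naturally onto a table-driven test: each row is a known scenario whose expected outcome must not change after a deployment. This sketch uses Python's built-in `unittest` with `subTest`, so each failing row is reported individually. The `flag_transaction` rule, its thresholds, and the scenarios are entirely hypothetical and for illustration only; they are not a recommended fraud policy.

```python
import unittest


def flag_transaction(amount_minor: int, country: str, txns_last_hour: int) -> bool:
    """Hypothetical fraud rule, for illustration only.

    Flags high-value payments from outside the home markets, or bursts of activity.
    """
    high_value = amount_minor >= 500_000           # e.g. 5,000.00 in minor units
    unusual_country = country not in {"IN", "AE"}  # ISO codes for India and the UAE
    burst = txns_last_hour > 10
    return (high_value and unusual_country) or burst


# Each row is a known scenario whose outcome must not change after a deployment.
# (description, amount_minor, country, txns_last_hour, expected_flag)
CASES = [
    ("small domestic payment", 10_000, "IN", 1, False),
    ("large payment from a home market", 900_000, "AE", 1, False),
    ("large payment from an unusual country", 900_000, "BR", 1, True),
    ("burst of small payments", 500, "IN", 25, True),
    ("boundary: exactly 10 payments in the hour", 500, "IN", 10, False),
]


class FraudRuleRegression(unittest.TestCase):
    def test_known_scenarios(self) -> None:
        for desc, amount, country, txns, expected in CASES:
            # subTest reports each failing row separately instead of stopping at the first.
            with self.subTest(desc):
                self.assertEqual(flag_transaction(amount, country, txns), expected)

# Discovered and run by `python -m unittest` (or pytest) from the test directory.
```

Adding a scenario is one new row, which is what keeps a suite of hundreds of such checks cheap to maintain.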

For e-commerce teams, the same logic applies to cart and checkout flows, inventory sync, and discount engine logic. These are high-volume, high-stakes flows that run the same way every release. Automation covers them reliably. Your testers focus on the scenarios that actually change.

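In short, well-implemented automation delivers shorter regression cycles, fewer production bugs, lower QA cost per release, and testers freed up for exploratory and new-feature work.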

For teams managing distributed testing across India and UAE offices, automation also solves a coordination problem. Automated test suites run on demand, in any time zone, without requiring a tester to be online. That is a real operational advantage for startups with distributed teams.

Most automation guides focus on tools and frameworks. They tell you to use Selenium, or Playwright, or Cypress, and they walk you through setup. What they miss is the strategic layer: why you are automating, what you expect automation to do, and what it cannot do.

The teams that get the most from automation are not the ones who automate the most tests. They are the ones who automate the right tests and keep their human testers focused on the work that actually requires human judgment.

Automation improves quality and speed but does not eliminate the need for exploratory testing. In practice, this means your total testing time may not shrink dramatically. What changes is the composition of that time. Less repetition, more insight. Less regression running, more edge-case discovery.

The founders and CTOs who treat automation as a cost-cutting tool often end up disappointed. The ones who treat it as a coverage-expansion tool consistently see outsized results. The goal is not to replace testers. It is to let your existing testers do work that a script cannot do.

A hybrid QA model for startups, combining AI-driven automation with skilled manual testers, is not a compromise. It is the architecture that delivers both speed and quality at scale. Pure automation creates blind spots. Pure manual testing creates bottlenecks. The hybrid approach avoids both.

If the data in this article resonates with where your team is right now, you are not alone. Most fintech and e-commerce startups in India and UAE reach a point where manual QA simply cannot keep pace with their release velocity.

Testvox works directly with startups and growth-stage teams to design and implement automation programs that fit your stack, your team size, and your release cadence. Whether you need QA for e-commerce apps or are navigating the compliance and integration complexity of QA for fintech apps, we bring proven frameworks and hands-on execution. Explore fintech testing best practices, or see how we approach e-commerce checkout QA, to understand what a structured automation engagement looks like in practice.

Up to 90% of manual QA can be automated within 90 days using a structured automation pod approach, as demonstrated by real-world deployments. The key is targeting repetitive, high-volume, stable test cases first rather than trying to automate everything at once.

Automation improves speed and coverage but typically shifts manual testing time toward exploratory and edge-case work rather than eliminating it. Total testing time may stay similar, but the quality and depth of coverage increase significantly.

The most common failures are flaky tests from UI changes, high maintenance costs consuming 20 to 50% of QA time, and over-automating areas that require human judgment. Prioritizing stable API tests over brittle UI tests resolves most of these issues.

Automation is not limited to engineers who write code. Scriptless and no-code platforms now let QA engineers without programming backgrounds build, run, and maintain automated test suites, making automation accessible across the full QA team.
