AI testing tools sound like a shortcut to bug-free releases. But even advanced AI models like SWEAgent GPT-5-mini achieve only a 17.27% fail-to-pass rate in proactive bug detection, meaning the vast majority of hidden defects still slip through. For fintech founders rushing a payments feature to market, or e-commerce CTOs scaling checkout flows ahead of peak season, that gap is not a minor footnote. It is a product liability, a compliance risk, and a revenue threat. This guide breaks down exactly how AI-powered software testing works, where it genuinely helps, and how to combine it with expert human judgment to build something your users can actually trust.

| Point | Details |
|---|---|
| AI boosts test coverage | AI-powered testing can identify bugs and security gaps that traditional scripts may miss, but results are highly dependent on robust evaluation frameworks. |
| Benchmarks reveal reliability gap | Current AI models find only a fraction of bugs proactively, emphasizing the need for layered manual and automated QA. |
| Compliance needs rule-based checks | Trace/rule-driven evaluation using documentation intent helps meet fintech and e-commerce compliance requirements. |
| Security requires multi-signal validation | Execution-based and multi-layered verification are vital as AI-only security success rates remain low. |
| Pilot AI with risk awareness | Start with small-scale, risk-aware pilots that blend AI, human QA, and outcome-based evaluation for best results. |

Traditional test automation runs pre-written scripts against known scenarios. It is reliable, repeatable, and largely blind to anything outside the script. AI-powered software testing takes a fundamentally different approach. It uses large language models (LLMs), intelligent agents, and AI copilots to reason about your software’s intent, then generate, run, and evaluate tests based on that understanding.

Here is where it gets interesting. A traditional automation tool asks: “Did the output match the expected value?” An AI testing agent asks: “Given what this feature is supposed to do, did the software actually do it correctly?” That shift from pattern matching to intent reasoning is what makes modern AI testing genuinely powerful and also more complex to implement well.

Learn more about how AI is helping software testing evolve from rigid scripted checks into adaptive, intelligence-driven quality workflows.

Core capabilities that distinguish AI-powered testing include:

Pro Tip: Not all AI testing tools operate the same way. Look for platforms where documentation-derived intent serves as the evaluation oracle, meaning the AI judges correctness based on what the software was supposed to do, not just what it actually did.

Now that we understand what AI brings to software testing, let’s explore how it actually uncovers defects and where it still falls short.

The key innovation in proactive AI testing is the concept of a “documentation oracle.” Instead of relying solely on existing test cases, the AI reads your product requirements, API documentation, or user stories, then constructs tests designed to expose gaps between intent and implementation. This is a genuine leap beyond regression-only approaches.
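The documentation-oracle idea can be sketched in a few lines of Python. This is a minimal illustration, not any vendor's implementation: the documented rule ("orders of 100 or more receive a 10% discount"), the `SpecCase` type, and the deliberately buggy `discount_under_test` function are all hypothetical. The point is that the expected values come from the documentation, so the boundary bug surfaces even though no existing test covers it.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class SpecCase:
    """One expectation derived from documentation, not from the code."""
    description: str
    order_total: float
    expected_discount: float


def discount_under_test(order_total: float) -> float:
    """Hypothetical implementation with a boundary bug (> instead of >=)."""
    return round(order_total * 0.10, 2) if order_total > 100 else 0.0


# Cases a tool or a reviewer might derive from the documented rule:
# "Orders of 100 or more receive a 10% discount."
SPEC_CASES = [
    SpecCase("below threshold", 99.99, 0.0),
    SpecCase("exactly at threshold", 100.00, 10.0),
    SpecCase("above threshold", 250.00, 25.0),
]


def find_intent_gaps(impl: Callable[[float], float], cases: list[SpecCase]) -> list[str]:
    """Return the cases where the implementation disagrees with documented intent."""
    return [
        c.description
        for c in cases
        if abs(impl(c.order_total) - c.expected_discount) > 1e-9
    ]


gaps = find_intent_gaps(discount_under_test, SPEC_CASES)
print(gaps)  # ['exactly at threshold']
```

In a real pipeline the cases would be generated from requirements text rather than written by hand, and a person would still need to confirm that each reported gap is a defect rather than a misreading of the documentation.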

Here is a step-by-step view of how modern AI bug discovery works:

This pipeline is more nuanced than traditional automation, and it produces genuinely different results. The TestExplora benchmark reports a maximum fail-to-pass rate of 16.06%, which means even top models leave roughly 84% of potential defects undetected through AI alone. For a fintech app processing real money, that number carries serious weight.
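To make the metric concrete, here is a small sketch of how a fail-to-pass rate could be computed. It assumes the common meaning of the term: a generated test counts only if it fails on the defective version of the code and passes on the corrected one. The benchmark's exact protocol may differ, and the `GeneratedTestRun` type and the sample data are made up for illustration.

```python
from dataclasses import dataclass


@dataclass
class GeneratedTestRun:
    """Outcome of one generated test on two versions of the code (illustrative)."""
    task_id: str
    passes_on_buggy: bool
    passes_on_fixed: bool


def is_fail_to_pass(run: GeneratedTestRun) -> bool:
    # The test must expose the defect (fail on the buggy version) and
    # agree with the intended behavior (pass on the fixed version).
    return (not run.passes_on_buggy) and run.passes_on_fixed


def fail_to_pass_rate(runs: list[GeneratedTestRun]) -> float:
    """Share of runs that are fail-to-pass, as a percentage."""
    if not runs:
        return 0.0
    return 100.0 * sum(is_fail_to_pass(r) for r in runs) / len(runs)


# Made-up sample data, not benchmark results.
sample = [
    GeneratedTestRun("t1", passes_on_buggy=False, passes_on_fixed=True),   # exposes the bug
    GeneratedTestRun("t2", passes_on_buggy=True, passes_on_fixed=True),    # misses the bug
    GeneratedTestRun("t3", passes_on_buggy=False, passes_on_fixed=False),  # test itself is wrong
    GeneratedTestRun("t4", passes_on_buggy=True, passes_on_fixed=True),    # misses the bug
]
rate = fail_to_pass_rate(sample)
print(f"{rate:.1f}%")  # 25.0%
```

Note that a test which fails on both versions (`t3`) does not count: it signals a broken test, not a discovered defect.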

Proactive vs. regression-only testing: a quick comparison

| Dimension | Proactive AI testing | Regression-only testing |
|---|---|---|
| Bug discovery method | Documentation intent and reasoning | Existing test scripts and known states |
| Detects unknown bugs | Yes, based on specification gaps | Rarely, only if pre-written |
| Setup requirement | Requires well-documented requirements | Requires maintained test scripts |
| Coverage of edge cases | High, generative by nature | Low to medium, depends on script quality |
| Human oversight needed | High, to interpret AI judgments | Medium, to maintain scripts |
| Best suited for | New features, compliance-sensitive flows | Stable, well-tested legacy code |

“Relying on code-generation success metrics as a proxy for software quality is misleading. Bug detection requires evaluation against intent, not just syntax correctness.”

This distinction matters enormously when you are scaling AI-human hybrid QA models across a growing product team. Pure automation metrics do not tell you whether your KYC flow actually verifies identity correctly or whether your cart abandonment logic handles edge cases properly. Teams that understand this difference consistently outperform those scaling QA with AI without proper oversight structures.

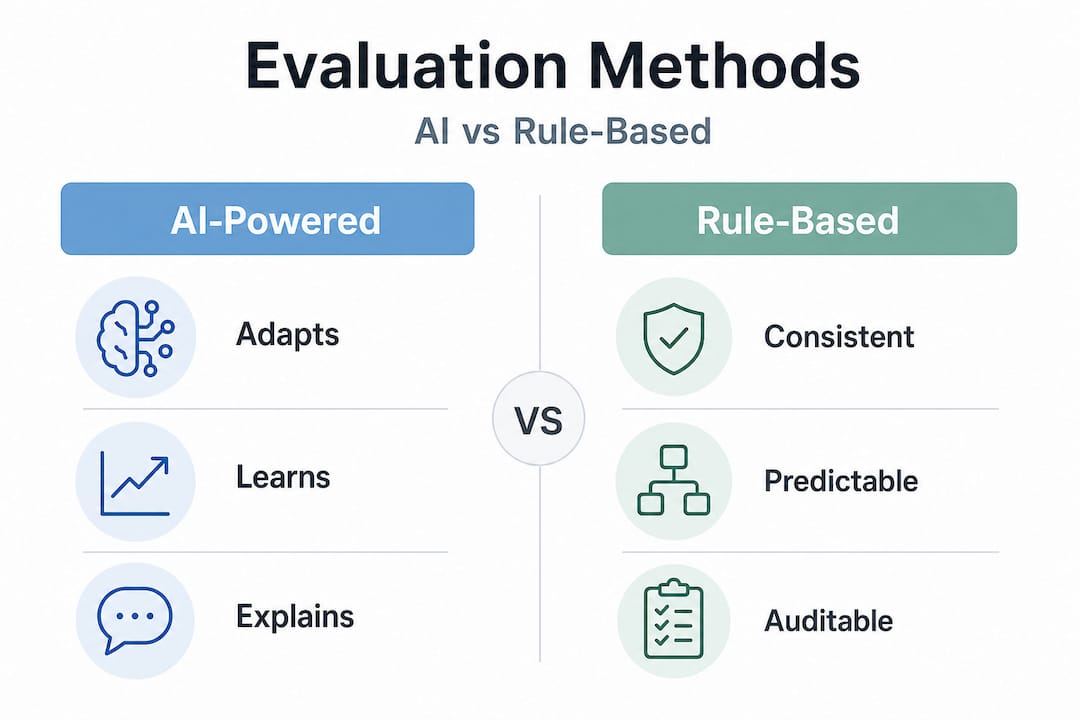

While fail-to-pass rates and classic pass/fail outcomes matter, fintech and e-commerce applications need deeper assurances, especially around compliance and complex business rules.

Pass/fail testing tells you that something broke. Trace-driven evaluation tells you why it broke and whether it violated a specific rule your product is supposed to follow. For a fintech platform, that could mean a KYC check that passed technically but failed to apply the correct jurisdiction-specific requirements. For an e-commerce platform, it could mean a discount rule that executed without error but applied the wrong tier for a high-value customer segment.

Agent-Pex parses agent prompts and execution traces to extract behavioral rules that can then be used as evaluation criteria. This approach turns invisible logic into auditable, verifiable checkpoints.

Rule categories and their detection value

| Rule type | Example | Why it matters |
|---|---|---|
| Behavioral rules | “User must be redirected after login” | Core user flow integrity |
| Security rules | “No PII in API response logs” | Data privacy and regulatory compliance |
| Business logic rules | “Discounts cannot stack beyond 30%” | Revenue protection |
| Legal and regulatory rules | “Transaction limits per RBI/DFSA guidelines” | Fines and license risk avoidance |
| Performance rules | “Checkout must complete within 2 seconds” | Conversion rate and SLA compliance |

Using multi-layered rule extraction alongside standard test outcomes gives your QA strategy a much stronger foundation. It is especially valuable in AI quality engineering programs where a single audit needs to cover both functional correctness and compliance.
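As a rough illustration of rule checks layered over an execution trace, the sketch below evaluates three of the example rules from the table against a recorded checkout run. It is not Agent-Pex's interface: the trace format, event names, and check functions are hypothetical, the PII check is reduced to spotting email addresses, and "stacking" is assumed to mean summing discount percentages.

```python
import re
from typing import Callable

# Hypothetical trace: events recorded during one checkout run that
# completed without any error.
trace = [
    {"event": "discount_applied", "percent": 20},
    {"event": "discount_applied", "percent": 15},
    {"event": "api_log", "message": "order 1182 confirmed for user 77"},
    {"event": "checkout_complete", "duration_seconds": 1.4},
]

EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


def discounts_within_cap(events: list[dict]) -> bool:
    """Business rule: stacked discounts cannot exceed 30%."""
    total = sum(e["percent"] for e in events if e["event"] == "discount_applied")
    return total <= 30


def no_email_in_logs(events: list[dict]) -> bool:
    """Security rule (simplified): no email address in API log messages."""
    return not any(
        EMAIL.search(e["message"]) for e in events if e["event"] == "api_log"
    )


def checkout_within_two_seconds(events: list[dict]) -> bool:
    """Performance rule: checkout must complete within 2 seconds."""
    return all(
        e["duration_seconds"] <= 2.0
        for e in events
        if e["event"] == "checkout_complete"
    )


RULES: dict[str, Callable[[list[dict]], bool]] = {
    "discounts_within_cap": discounts_within_cap,
    "no_email_in_logs": no_email_in_logs,
    "checkout_within_two_seconds": checkout_within_two_seconds,
}

violations = [name for name, check in RULES.items() if not check(trace)]
print(violations)  # ['discounts_within_cap']
```

The run would count as a pass in a plain pass/fail suite, yet the trace shows 35% of stacked discounts. That is the kind of gap rule-level evaluation is meant to expose.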

Pro Tip: Use multi-layered rule extraction to avoid compliance blind spots. A tool that only checks functional outcomes will miss the security and regulatory layers that regulators and auditors actually care about in fintech and e-commerce environments.

Bug detection is just the beginning; for fintech and e-commerce, true assurance comes from robust and layered security testing. Here is why AI alone is not enough.

The numbers are sobering. The SECUREAGENTBENCH benchmark reports that, on average, only 9.2% of AI agent outcomes are both correct and secure, with the best-performing model reaching just 15.2%. In other words, the overwhelming majority of results from AI agents, even strong ones, are functionally incorrect, insecure, or both.

What this means in practice: An AI security agent might fix an authentication bug but introduce an insecure direct object reference vulnerability in the same change. Or it might correctly identify a SQL injection risk but fail to verify that the remediation actually closes the attack vector.
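Checking that a remediation actually closes the attack vector can be done by executing the attack rather than reading the patch. The sketch below uses Python's standard `sqlite3` module with an in-memory database; the table, function names, and payload are illustrative assumptions, and a real verification suite would cover many more payloads.

```python
import sqlite3


def make_db() -> sqlite3.Connection:
    """Create a throwaway in-memory database with two users."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (name TEXT, secret TEXT)")
    conn.executemany(
        "INSERT INTO users VALUES (?, ?)",
        [("alice", "a-secret"), ("bob", "b-secret")],
    )
    return conn


def lookup_vulnerable(conn: sqlite3.Connection, name: str) -> list:
    # String interpolation lets attacker-controlled input rewrite the query.
    return conn.execute(
        f"SELECT secret FROM users WHERE name = '{name}'"
    ).fetchall()


def lookup_fixed(conn: sqlite3.Connection, name: str) -> list:
    # Parameter binding treats the input strictly as data.
    return conn.execute(
        "SELECT secret FROM users WHERE name = ?", (name,)
    ).fetchall()


PAYLOAD = "nobody' OR '1'='1"


def leaks_rows(lookup, conn: sqlite3.Connection) -> bool:
    """True if the payload returns rows for a user that does not exist."""
    return len(lookup(conn, PAYLOAD)) > 0


conn = make_db()
vulnerable_leaks = leaks_rows(lookup_vulnerable, conn)
fixed_leaks = leaks_rows(lookup_fixed, conn)
still_works = lookup_fixed(conn, "alice") == [("a-secret",)]
print(vulnerable_leaks, fixed_leaks, still_works)  # True False True
```

The check has two halves on purpose: the exploit must stop working, and the legitimate lookup must keep working. A fix that passes only one of the two is either insecure or incorrect.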

Here is how to build layered security checks that use AI as one signal among many:

“Security reliability gaps in AI agents are persistent and real. Teams that treat AI security output as a final verdict rather than a first signal are taking on significant risk in production.”

Pairing AI-assisted scanning with shift-left AI QA strategies gives you early detection without sacrificing the depth that high-stakes sectors demand. Shift-left simply means moving testing earlier in the development cycle, catching issues when they are cheapest to fix rather than days before a release deadline.

Armed with this context, let’s outline best practices for deploying and scaling AI-powered software testing across a startup or SME environment.

The single biggest mistake teams make is treating AI testing as a plug-and-play replacement for a QA function. It is not. It is a force multiplier for a QA function that already has the right structure and judgment in place. When you add AI to a team that lacks clear requirements, documented business rules, and defined success criteria, you get fast generation of low-quality tests. Garbage in, garbage out applies here too.

A risk-aware evaluation framework for AI pilots includes:

Pilots should include risk-aware evaluation criteria rather than only measuring code generation success. This is the benchmark insight that most vendor pitches skip entirely.

Explore the top AI testing tools available today to find options that align with your stack and support the kind of documentation-intent evaluation described in this guide. The right tool choice at the pilot stage often determines whether AI testing becomes a true capability or just a buzzword on a sprint board.

Most articles on AI-powered software testing lead with the productivity wins. Faster test generation, lower manual effort, better coverage, all true. But they rarely spend time on what the benchmarks actually reveal: AI testing is genuinely powerful and genuinely incomplete, at the same time.

The uncomfortable reality is that AI models reason well about common patterns and documented behavior, but they struggle with the kind of contextual, domain-specific judgment that experienced QA engineers bring to a fintech or e-commerce codebase. A senior QA specialist reviewing a payment gateway integration knows that a technically passing transaction might still violate a regulatory cap, create a ledger inconsistency, or generate a misleading customer receipt. An AI agent, without that domain context, may never see the issue.

We see this play out consistently in complex application environments. Teams that adopt pure-AI testing programs often discover, six months later, that their coverage looks impressive on paper but misses entire categories of compliance and security issues. The metrics show green. The regulators do not agree.

The hybrid approach consistently outperforms single-track strategies: AI accelerates test generation, rule extraction, and regression coverage, while expert QA provides intent validation, domain judgment, and compliance oversight. This is not a temporary limitation while AI catches up. It reflects something more structural: software quality in regulated industries requires contextual judgment that pure automation cannot replace.

The right frame is not “AI versus manual testing.” It is “AI-generated coverage plus human-validated confidence.” Teams that internalize this distinction ship better products, avoid costly post-release patches, and pass audits with far less scrambling. Browse our AI testing case studies for real-world examples of how this balance plays out in production environments.

Putting these principles into practice is where the real complexity begins, and where the right partner makes a measurable difference.

Testvox works with fintech and e-commerce teams to build AI-powered QA programs that go well beyond surface-level automation. From documentation-intent test generation to AI-powered testing solutions that integrate trace-driven rule analysis and execution-based security verification, we bring both the tooling and the expert judgment your product demands. Whether your team is preparing for a beta launch, expanding into a regulated market, or hardening a checkout flow before peak season, our fintech app testing best practices and e-commerce QA best practices give you a proven framework for shipping with confidence. Talk to our team to find out how we can accelerate your QA without cutting corners.

Proactive AI software testing detects bugs by interpreting requirements and documentation, not just reacting to failed code or existing test cases. Where traditional automation checks known scenarios, proactive AI testing generates tests from documented intent to surface defects no one thought to write a test for yet.

Even the best benchmarks show significant limitations: SWEAgent GPT-5-mini achieves a 17.27% fail-to-pass rate for proactive bug detection, and SECUREAGENTBENCH reports only 9.2% average correct-and-secure outcomes, which is why expert human oversight remains essential in critical application environments.

Focus first on tools that use documentation-intent evaluation rather than just code-generation metrics, and pair them with trace-based rule analysis and execution-verified security checks to get coverage that actually holds up under compliance scrutiny.

By extracting explicit and implicit rules from product documentation and business logic, AI-powered testing can verify that regulatory, security, and business constraints are enforced in live code. Agent-Pex demonstrates how behavioral rule extraction from agent traces can power this kind of compliance verification at scale.

Let us know what you’re looking for, and we’ll connect you with a Testvox expert who can offer more information about our solutions and answer any questions you might have.